Building a local AI agent sounds great until you try to use one all day. The hard part isn’t getting a model to understand you; it’s getting it to choose the right tool, and to do so fast enough that the experience feels interactive. In regulated industries, the bar is even higher: the agent also cannot send your data anywhere along the way. This is where LFM2-24B-A2B shines. It’s designed for tool dispatch on local consumer hardware, where latency, memory, and privacy aren’t abstract constraints: they decide whether your agent is a product or a demo.

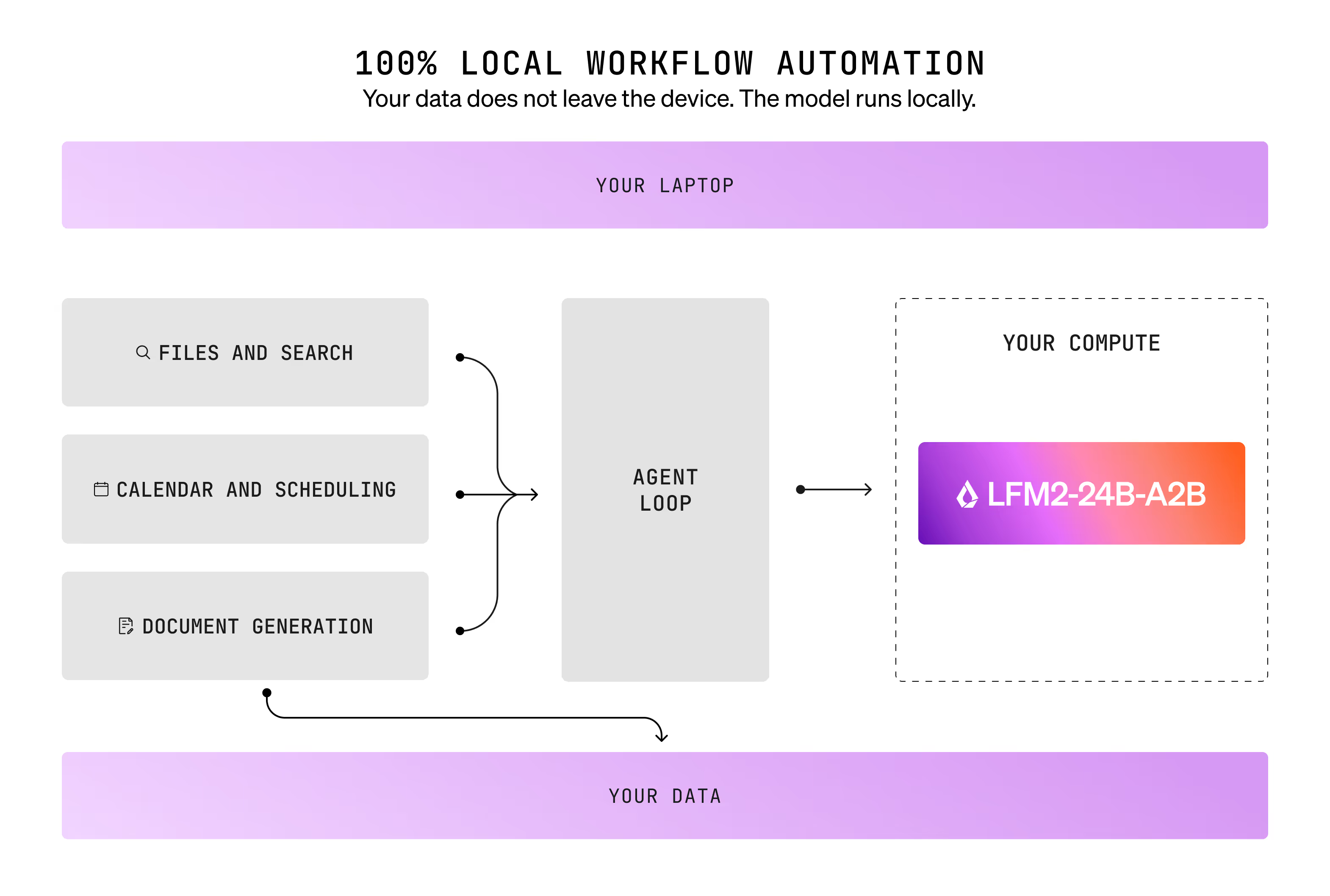

We built an open-source desktop agent called LocalCowork to put this to the test. It runs entirely on-device: the model, the tools, and all your data stay on your laptop. No cloud, no API keys, nothing leaves the machine. You can find the app's source code in our Cookbook.

Here's what we found.

The use case: an on-device agent that acts on your files

The job of a laptop agent is simple: take an intent and turn it into an action. That means selecting from real tool surfaces like:

- Security scanning - find leaked API keys and personal data buried in your folders

- Audit trails - log every tool call, generate compliance reports

- Document processing - extract text, diff contracts, generate PDFs

- File operations - list, read, search across your filesystem

- System & clipboard - get system info, read/write clipboard, check disk usage

In practice, this is harder than it looks because tool menus are large and crowded. We evaluated LFM2-24B-A2B in exactly that environment: 67 tools across 13 MCP servers, spanning the categories above.

The setup: local, realistic, and scaled

Everything ran on a single laptop:

- Apple M4 Max, 36 GB unified memory, 32 GPU cores

- Workload: 100 single-step tool selection prompts + 50 multi-step chains (3–6 steps)

- Serving: llama-server, Q4_K_M GGUF, flash attention enabled

- Inference: temperature 0.1, top_p 0.1, max_tokens 512
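As a rough illustration of how these settings translate into a request, here is a minimal Python sketch. It assumes llama-server is reachable through its OpenAI-compatible chat completions endpoint at a local address; the URL, the `list_directory` tool schema, and the helper names are hypothetical and are not taken from LocalCowork. Depending on your llama.cpp version, tool calling may need additional server flags.

```python
import json
import urllib.request

# Assumption: llama-server is listening locally at this address.
SERVER_URL = "http://127.0.0.1:8080/v1/chat/completions"

# Hypothetical tool schema, for illustration only.
LIST_DIRECTORY_TOOL = {
    "type": "function",
    "function": {
        "name": "list_directory",
        "description": "List the files in a directory.",
        "parameters": {
            "type": "object",
            "properties": {"path": {"type": "string"}},
            "required": ["path"],
        },
    },
}


def build_payload(prompt, tools):
    """Build a chat-completions payload with the sampling settings listed above."""
    return {
        "messages": [{"role": "user", "content": prompt}],
        "tools": tools,
        "temperature": 0.1,
        "top_p": 0.1,
        "max_tokens": 512,
    }


def select_tool(prompt, tools, url=SERVER_URL):
    """POST the payload; return the first tool call, or None if the model replied in text."""
    request = urllib.request.Request(
        url,
        data=json.dumps(build_payload(prompt, tools)).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        message = json.load(response)["choices"][0]["message"]
    calls = message.get("tool_calls") or []
    return calls[0] if calls else None


# Building the payload needs no running server; select_tool() does.
payload = build_payload("What is in my Downloads folder?", [LIST_DIRECTORY_TOOL])
```

The low temperature and top_p keep tool selection close to deterministic, which makes repeated benchmark runs easier to compare.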

The goal wasn’t to see how good the model could look in a toy environment. It was to measure whether it can drive a real tool-using UX on a machine you can buy and carry.

The demo shows three workflows running entirely on a laptop - no internet required:

- Instant Security Scan and Transparent Audit Trail: A developer can command LocalCowork to scan a folder for leaked secrets. The model immediately uses the security scanner to identify credentials (e.g., AWS, Stripe API keys) and logs all actions. A subsequent request for the "audit trail" triggers a call to a different server, which rapidly provides a complete log of the scan, including what was found and when. This showcases successful, sub-second chaining of two tool calls across two distinct Model Context Protocol (MCP) servers.

- System Information with Full Tool-Call Transparency: When a user clicks "Tell me about my system," the model instantly dispatches the request to the system info tool. In the demo, the tool-call trace can be expanded to show the whole process: which tool was invoked, the parameters passed, and the resulting output. Nothing runs as a black box.

- Cross-Server Clipboard Access: In a new session that targets a different server, the model retrieves the clipboard content in under a second. The point of this demonstration is not the clipboard function itself, but the model's ability to route the request accurately and quickly across 21 tools and 6 servers, all while running locally.

What it feels like: sub-second tool selection

On this laptop configuration, LFM2-24B-A2B averaged ~385 ms per tool-selection response while fitting in ~14.5 GB of memory. Because inference runs entirely on the device, no data leaves it: no outbound API call, no vendor subprocessor, no egress event to audit.

The model reached 80% accuracy on single-step tool selection across 100 prompts. Combined with sub-second latency, that enables a simple, high-leverage interaction pattern:

- The user asks

- The agent proposes calling the tool immediately

- The user confirms (or corrects)

- The tool runs

- Repeat

When the loop is fast, even imperfect accuracy becomes extremely usable, because the cost of correction is low.
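The ask, propose, confirm, run loop can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not LocalCowork's implementation: `ToolCall`, `run_turn`, the two stub tools, and the stub callbacks are all hypothetical names, with the stubs standing in for the model and the user.

```python
from dataclasses import dataclass, field


@dataclass
class ToolCall:
    name: str
    arguments: dict = field(default_factory=dict)


def run_turn(request, propose, confirm, tools):
    """One pass of the loop: the agent proposes, the user confirms or corrects, the tool runs."""
    proposal = propose(request)
    if proposal is None:
        return None  # the model answered conversationally instead of calling a tool
    approved = confirm(proposal)  # the proposal, a corrected ToolCall, or None to cancel
    if approved is None:
        return None
    return tools[approved.name](**approved.arguments)


# Hypothetical tools, implemented as plain functions for the sketch.
TOOLS = {
    "list_files": lambda path: f"listed {path}",
    "search_files": lambda path, query: f"searched {path} for {query}",
}


def stub_propose(request):
    # Stands in for the model; deliberately picks a sibling of the right tool.
    return ToolCall("list_files", {"path": "~/Documents"})


def stub_confirm(proposal):
    # Stands in for the user, who corrects the proposal before it runs.
    return ToolCall("search_files", {"path": proposal.arguments["path"], "query": "invoice"})


result = run_turn("Find invoices in my documents", stub_propose, stub_confirm, TOOLS)
```

Because nothing executes until the user approves, a wrong proposal costs one correction rather than a wrong action, which is why a fast proposal step matters so much here.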

A real workflow: receipts → structured data → report

Here’s a representative real-world chain from the benchmark suite:

- Search a Receipts folder for images

- OCR the first receipt

- Parse vendor/date/items/total

- Check for duplicates

- Export reconciled data to CSV

- Flag anomalies

- Generate a PDF reconciliation report

This is the kind of workflow where tool-using agents fail in real life: not because any single step is exotic, but because the agent must repeatedly choose the correct action from a large tool set while carrying state across steps. LFM2’s strength is that it makes each step feel immediate.
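To make "carrying state across steps" concrete, here is a minimal Python sketch of a chain runner that passes accumulated state from one step to the next and stops at the first failure. The step functions are stubs with placeholder values, and names such as `run_chain` and `find_images` are hypothetical; this is not the benchmark harness.

```python
def run_chain(steps, state=None):
    """Run steps in order, merging each step's output into shared state; stop at the first failure."""
    state = dict(state or {})
    completed = []
    for name, step in steps:
        try:
            state.update(step(state))
        except Exception as exc:
            return {"ok": False, "completed": completed, "failed": name,
                    "error": str(exc), "state": state}
        completed.append(name)
    return {"ok": True, "completed": completed, "failed": None,
            "error": None, "state": state}


# Stub steps with placeholder data; a real agent would call tools here.
def find_images(state):
    return {"images": ["receipt_001.png"]}


def ocr_first(state):
    # Depends on the output of the previous step.
    return {"text": f"text of {state['images'][0]}"}


def parse_fields(state):
    if not state["text"]:
        raise ValueError("empty OCR text")
    return {"fields": {"source": state["images"][0]}}


STEPS = [
    ("find_images", find_images),
    ("ocr_first", ocr_first),
    ("parse_fields", parse_fields),
]

result = run_chain(STEPS)
# Skipping the first step shows how a missing piece of state halts the chain.
broken = run_chain(STEPS[1:])
```

Recording which step failed, and with what state, gives the user a precise place to step in when a chain stalls.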

Where LFM2 is strongest: structured domains

Single-step performance was strongest in categories where intent is clear and tool schemas are crisp:

What we learned

The most common errors were predictable and can be addressed with standard post-training.

- Sibling confusion inside a server. Most wrong-tool selections were near-neighbor mistakes: choosing a related tool in the same family (e.g., listing when deletion was required).

- Occasional “no tool call”. A smaller portion of failures came from the model replying conversationally instead of emitting a tool call.

- Multi-step autonomy is harder than single-step dispatch. LFM2 completed 26% of multi-step chains end-to-end in this suite. The practical takeaway: LFM2 is best as a fast dispatcher in a guided loop, not a hands-off autopilot for long chains. As with the other failure modes, multi-step completion can be significantly improved via targeted post-training.
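If you want to break down your own evaluation results along the same lines, one possible approach is to label each prediction by comparing it against the expected tool and the server that tool belongs to. The sketch below assumes you have a tool-to-server mapping; the tool names, the sample data, and the `wrong_server` label are hypothetical additions for illustration, not figures or categories from the benchmark.

```python
from collections import Counter


def classify(expected, predicted, server_of):
    """Label one prediction using the failure categories described above."""
    if predicted is None:
        return "no_tool_call"  # the model replied conversationally
    if predicted == expected:
        return "correct"
    if server_of.get(predicted) == server_of[expected]:
        return "sibling_confusion"  # wrong tool, same server family
    return "wrong_server"


def summarize(results, server_of):
    """Count outcomes and compute single-step accuracy."""
    counts = Counter(classify(exp, pred, server_of) for exp, pred in results)
    accuracy = counts["correct"] / len(results) if results else 0.0
    return counts, accuracy


# Hypothetical tool-to-server mapping and (expected, predicted) pairs.
SERVER_OF = {
    "list_files": "filesystem",
    "delete_file": "filesystem",
    "read_clipboard": "clipboard",
}
SAMPLE = [
    ("list_files", "list_files"),
    ("delete_file", "list_files"),
    ("read_clipboard", None),
    ("read_clipboard", "read_clipboard"),
]

counts, accuracy = summarize(SAMPLE, SERVER_OF)
```

Separating sibling confusion from missing tool calls is useful because the two call for different fixes: the first points at overlapping tool descriptions, the second at the prompt or chat template.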

LFM2-24B-A2B has been trained on 17T tokens so far, and pre-training is still running. When pre-training completes, expect an LFM2.5-24B-A2B with additional post-training and reinforcement learning.

Takeaway

The demo isn't a proof of concept. It's a working desktop app scanning real files, chaining real tools, with full audit logging, all running on consumer hardware. The model fits in 14.5 GB, dispatches tools in under 400 ms, and handles the structured domains that matter most for privacy-sensitive work.

If you're building local agents for consumer machines, LFM2-24B-A2B gives you a fast, memory-efficient foundation. With a standard fine-tuning step on your tool schemas, it can become a reliable and responsive solution for agentic tasks directly on your users' laptops.

LocalCowork is open-source and available in our Cookbook.

Try it and experience the power of private and low-latency AI yourself.
